proportion power calculation for binomial distribution (arcsine transformation)

h = 0.344372

n = 72.21261

sig.level = 0.05

power = 0.9

alternative = greater

Sequential Testing for Experimental Design

Why Sequential Testing?

First, why should we do sequential testing? Why not just prespecify \(n\)?

Power Calculations

We can calculate the required number of samples \(n\) in advance using a power calculation.

(Boardwork)

Verify
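As a sanity check, the sample size in the output above can be reproduced by hand in base R. This is a minimal sketch that assumes the usual normal-approximation formula for a one-sided, one-sample proportion test on the arcsine scale, \(n = ((z_{1-\alpha} + z_{\text{power}})/h)^2\). The variable names are illustrative and not taken from the slides.

```r
# Effect size, significance level, and power as reported in the output
h         <- 0.344372
sig_level <- 0.05
power     <- 0.9

# One-sided normal approximation (assumed):
#   power = pnorm(h * sqrt(n) - qnorm(1 - sig_level))
# Solving for n gives:
n_required <- ((qnorm(1 - sig_level) + qnorm(power)) / h)^2

print(n_required)           # roughly 72.2
print(ceiling(n_required))  # round up to a whole number of samples
```

In practice the fractional \(n\) is rounded up, since you cannot collect a fraction of a sample.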

Always Do Sequential Testing Theorem

There is a theorem that is hard to state exactly, which I call the Always Do Sequential Testing Theorem (Wald, 1945).

If you pick \(n\) via a power calculation, on average you could have gotten away with fewer samples had you used a sequential test instead.
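A small simulation can make this claim concrete. The sketch below is illustrative only: it assumes Bernoulli data with \(p_0 = 0.4\) under the null and \(p_1 = 0.6\) under the alternative, type I error 0.05, power 0.9, Wald's approximate stopping thresholds, and data generated under the alternative. The function `sprt_stop` and all variable names are hypothetical.

```r
set.seed(1)
p0 <- 0.4; p1 <- 0.6          # assumed null and alternative success rates
alpha <- 0.05; beta <- 0.10   # type I and type II error targets

# Wald's approximate thresholds on the log-likelihood-ratio scale
upper <- log((1 - beta) / alpha)
lower <- log(beta / (1 - alpha))

# Run one SPRT on Bernoulli(p_true) data and return the stopping time
sprt_stop <- function(p_true, max_n = 10000) {
  llr <- 0
  for (t in seq_len(max_n)) {
    x <- rbinom(1, 1, p_true)
    llr <- llr + x * log(p1 / p0) + (1 - x) * log((1 - p1) / (1 - p0))
    if (llr >= upper || llr <= lower) return(t)
  }
  max_n  # safety cap; essentially never reached here
}

stops <- replicate(2000, sprt_stop(p1))

# Fixed-n design with the same error targets (arcsine normal approximation)
h <- 2 * asin(sqrt(p1)) - 2 * asin(sqrt(p0))
n_fixed <- ceiling(((qnorm(1 - alpha) + qnorm(1 - beta)) / h)^2)

print(mean(stops))  # average SPRT sample size under the alternative
print(n_fixed)      # prespecified sample size
```

Individual runs can stop later than the fixed \(n\); the saving is in the average.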

Uses of Modern Sequential Testing

Platforms like Statsig implement the SPRT, so it is quite easy to use in practice.

AI Deployment Monitoring

[LLM company logos: OpenAI, Anthropic, Google DeepMind, Meta AI]

- Monitor model quality after deployment

- Detect regressions in real time without waiting for a fixed \(n\)

Early Stopping for Clinical Trials

[Pharmaceutical company logos: Pfizer, Roche, Novartis, AstraZeneca]

- Stop early for efficacy or futility

- FDA-recommended group sequential designs
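To see why interim looks need adjusted boundaries, here is a minimal simulation sketch. It assumes normal data with known variance, five equally spaced looks, and a two-sided 0.05 level, and it uses the tabulated Pocock critical value of 2.413 for five looks. It is written in base R for illustration and does not use any group sequential package.

```r
set.seed(1)
n_sims  <- 20000   # simulated trials, all generated under the null
k_looks <- 5       # equally spaced interim analyses
n_stage <- 20      # observations added before each look

# Sum of n_stage N(0, 1) draws per stage is N(0, n_stage)
stage_sums <- matrix(rnorm(n_sims * k_looks, sd = sqrt(n_stage)), nrow = n_sims)

# Cumulative sums across looks, then standardize to z-statistics
cum_sums <- t(apply(stage_sums, 1, cumsum))
z_stats  <- sweep(cum_sums, 2, sqrt(n_stage * seq_len(k_looks)), "/")

# A trial rejects if |z| crosses the boundary at any look
max_abs_z    <- apply(abs(z_stats), 1, max)
naive_error  <- mean(max_abs_z > qnorm(0.975))  # reuse 1.96 at every look
pocock_error <- mean(max_abs_z > 2.413)         # Pocock constant, 5 looks

print(c(naive = naive_error, pocock = pocock_error))
```

Reusing the fixed-\(n\) cutoff at every look inflates the type I error well above 0.05, while the wider Pocock boundary keeps it near the nominal level.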

Problem: Need to Know Likelihoods!

The SPRT requires specifying \(P\) and \(Q\) — you need the distributions.
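For concreteness, here is one common way to write the test. It assumes \(P\) is the null and \(Q\) the alternative, with densities \(p\) and \(q\) (the slides do not say which is which), and uses Wald's approximate thresholds for type I error \(\alpha\) and type II error \(\beta\):

\[\Lambda_n = \prod_{i=1}^{n} \frac{q(X_i)}{p(X_i)}, \qquad \text{reject } H_0 \text{ if } \Lambda_n \ge \frac{1-\beta}{\alpha}, \qquad \text{accept } H_0 \text{ if } \Lambda_n \le \frac{\beta}{1-\alpha}, \qquad \text{otherwise keep sampling.}\]

Every factor of \(\Lambda_n\) evaluates both densities, which is why the distributions must be specified before any data arrive.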

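Recall our favorite statistics theorem, the CLT, which needs only a mean \(\mu\) and a finite variance \(\sigma^2\) rather than the full distribution: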

\[\frac{\bar X_n - \mu}{\sigma/\sqrt{n}} \xrightarrow{d} N(0,1)\]

Testing to Confidence Sequences

A few things aren’t very nice about the SPRT. It requires the analyst to:

- Know the distribution (and variance)

- Specify the null and the alternative

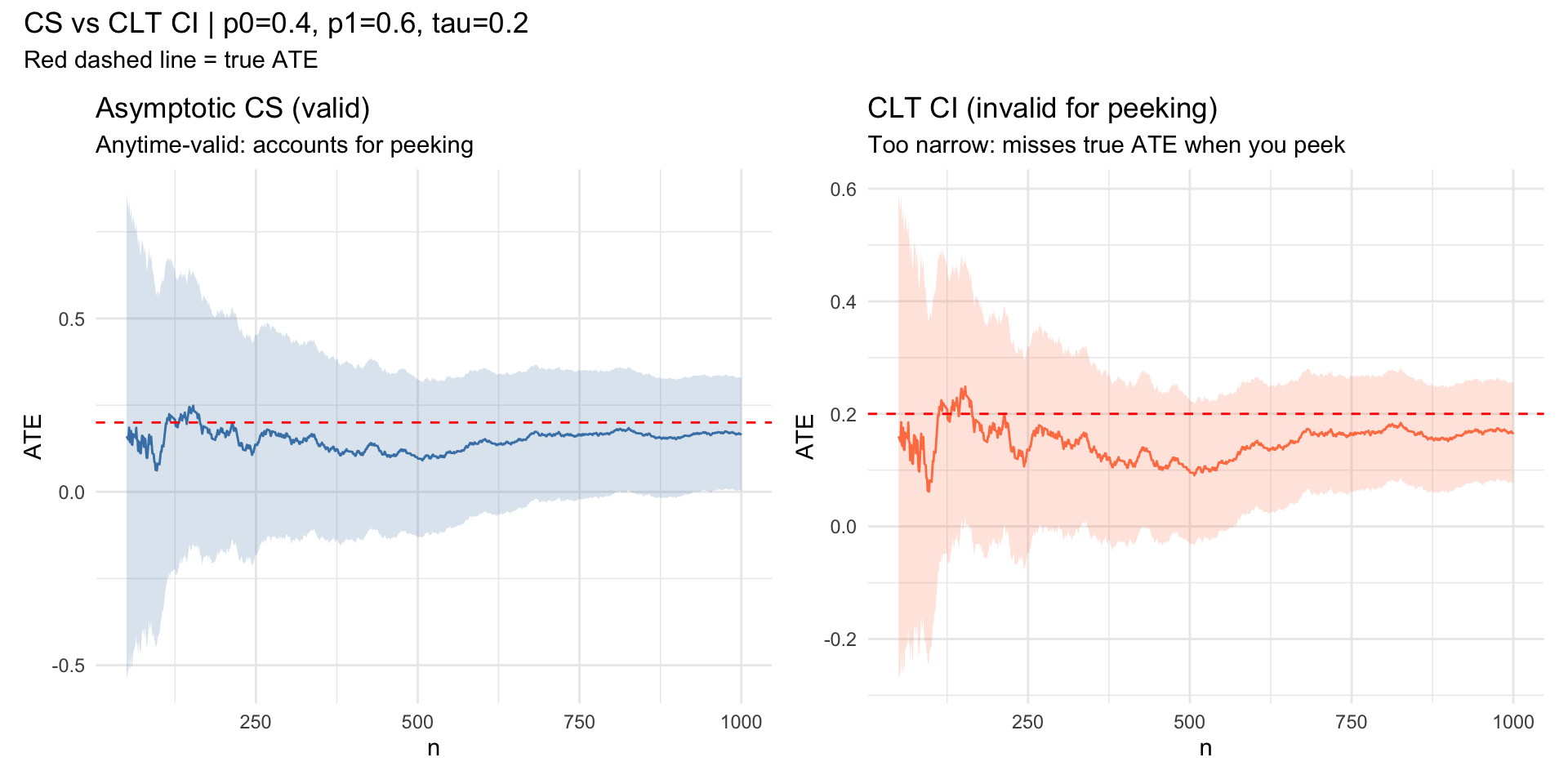

We’ll introduce confidence sequences (Waudby-Smith et al., 2024), the sequential analogue of confidence intervals, which remain valid at all sample sizes.
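Stated a bit more formally, a fixed-\(n\) confidence interval and a confidence sequence differ in where the quantifier over \(n\) sits. The notation is an assumption for this sketch, not from the slides: \(C_n\) is the interval reported after \(n\) samples and \(\mu\) is the target parameter.

\[\text{CI: } \forall n,\ \Pr(\mu \in C_n) \ge 1-\alpha \qquad\qquad \text{CS: } \Pr(\forall n \ge 1,\ \mu \in C_n) \ge 1-\alpha\]

The second statement covers all sample sizes simultaneously, which is what permits looking at the data after every observation. For asymptotic confidence sequences the guarantee holds approximately, in a sense made precise in the cited paper.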

Confidence Sequences vs. CLT CI

library(ggplot2)
library(patchwork)

set.seed(1)  # fixed seed so the figure is reproducible

# --- Parameters ---
# n: sample size; p0, p1: control / treatment success rates; e: treatment probability
n <- 1e3; p0 <- 0.4; p1 <- 0.6; e <- 0.5
tau <- p1 - p0  # true average treatment effect (ATE)

# Running sample standard deviation via Welford's online update
running_sd <- function(x) {
  n <- seq_along(x)
  M2 <- numeric(length(x))
  mu <- cumsum(x) / n
  for (t in seq_along(x)[-1]) {  # safe when length(x) == 1
    d <- x[t] - mu[t - 1]
    M2[t] <- M2[t - 1] + d * (x[t] - mu[t])
  }
  # floor the variance so the first radius is not exactly zero
  sqrt(pmax(M2 / pmax(n - 1, 1), 1e-10))
}

# Asymptotic confidence sequence with a Robbins-style normal mixture boundary
robbins_confseq <- function(x, alpha = 0.05, rho = 1) {
  n <- seq_along(x)
  mu_hat <- cumsum(x) / n
  s_n <- running_sd(x)
  radius <- s_n * sqrt((2 * (n * rho^2 + 1) / (n^2 * rho^2)) *
                       log(sqrt(n * rho^2 + 1) / alpha))
  data.frame(lower = mu_hat - radius, upper = mu_hat + radius)
}

# --- Simulate a randomized experiment ---
Z <- rbinom(n, 1, e)                                     # treatment assignment
Y <- ifelse(Z == 1, rbinom(n, 1, p1), rbinom(n, 1, p0))  # binary outcome
phi <- Z * Y / e - (1 - Z) * Y / (1 - e)                 # inverse-probability-weighted ATE terms

cs <- robbins_confseq(phi)
ns <- 1:n
mu_hat <- cumsum(phi) / ns                          # running ATE estimate
clt_hw <- qnorm(0.975) * running_sd(phi) / sqrt(ns) # fixed-n CLT half-width

df <- data.frame(
  t = ns,
  ate = mu_hat,
  cs_lo = cs$lower, cs_hi = cs$upper,
  clt_lo = mu_hat - clt_hw,
  clt_hi = mu_hat + clt_hw
)[50:n, ]  # drop the first 49 points, where both bands are very wide

# --- Plots ---
p1_plot <- ggplot(df, aes(t)) +
  geom_ribbon(aes(ymin = cs_lo, ymax = cs_hi), alpha = 0.2, fill = "steelblue") +
  geom_line(aes(y = ate), color = "steelblue") +
  geom_hline(yintercept = tau, color = "red", linetype = "dashed") +
  labs(x = "n", y = "ATE", title = "Asymptotic CS (valid)",
       subtitle = "Anytime-valid: accounts for peeking") +
  theme_minimal()

p2_plot <- ggplot(df, aes(t)) +
  geom_ribbon(aes(ymin = clt_lo, ymax = clt_hi), alpha = 0.2, fill = "coral") +
  geom_line(aes(y = ate), color = "coral") +
  geom_hline(yintercept = tau, color = "red", linetype = "dashed") +
  labs(x = "n", y = "ATE", title = "CLT CI (invalid for peeking)",
       subtitle = "Too narrow: misses true ATE when you peek") +
  theme_minimal()

p1_plot + p2_plot +
  plot_annotation(
    title = sprintf("CS vs CLT CI | p0=%.1f, p1=%.1f, tau=%.1f", p0, p1, tau),
    subtitle = "Red dashed line = true ATE"
  )
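A single path can look fine by luck, so it helps to estimate how often each band ever excludes the truth when you peek at every \(n\). The sketch below is a rough check under simplifying assumptions: a plain Bernoulli(0.5) mean instead of the ATE, 200 simulated streams, looks at every \(n\) from 50 to 1000, and the same radius formula as in the code above. The function `ever_misses` and the variable names are hypothetical.

```r
set.seed(1)
n_obs  <- 1000   # observations per simulated stream
n_reps <- 200    # number of simulated streams
burn   <- 50     # first look, matching the plots, which start at n = 50
alpha  <- 0.05
rho    <- 1
mu     <- 0.5    # true mean of the Bernoulli stream

# Does each band exclude the true mean at ANY look in burn:n_obs?
ever_misses <- function() {
  x      <- rbinom(n_obs, 1, mu)
  k      <- seq_len(n_obs)
  mu_hat <- cumsum(x) / k
  # running sample SD from cumulative sums (0 at k = 1)
  s      <- sqrt(pmax((cumsum(x^2) - k * mu_hat^2) / pmax(k - 1, 1), 0))
  clt_hw <- qnorm(1 - alpha / 2) * s / sqrt(k)
  cs_hw  <- s * sqrt((2 * (k * rho^2 + 1) / (k^2 * rho^2)) *
                     log(sqrt(k * rho^2 + 1) / alpha))
  look <- burn:n_obs
  err  <- abs(mu_hat[look] - mu)
  c(clt = any(err > clt_hw[look]), cs = any(err > cs_hw[look]))
}

# Fraction of streams in which each band misses at least once
miss_rate <- rowMeans(replicate(n_reps, ever_misses()))
print(miss_rate)
```

The CLT band is only calibrated for a single prespecified \(n\), so its "ever misses" rate under continuous peeking ends up far above the nominal 5%, while the confidence sequence pays for its wider band with a much lower rate.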

Miscellaneous

Group Sequential Designs are recommended in FDA regulatory settings and popular in industry.

- The package gsDesign is the go-to package for this method

- Forces the analyst to pick interim analysis times

- My current research is related to these methods

- The package